YOLOv5m benchmark Error

I'm trying to quantize YOLOv5m with OwLite in a Docker environment, but benchmarking fails with the error below. The same error occurred on each of three attempts. I used MSE as the calibration method for PTQ. The quantized ONNX model was created, but the engine file could not be built. I followed the example in the OwLite GitHub repository. Why does benchmarking fail?

It's hard to make a minimal reproducer for this error, so I'm attaching the relevant part of YOLOv5's `train.py` and the resulting traceback.

Environment:

- Ubuntu 22.04.4
- owlite: 2.1.0
- pytorch: 2.1.2
- python: 3.10.12

Code:

```python
if opt.owlite_ptq or not opt.owlite_qat:
    # Benchmark the model using OwLite
    owl.export(de_parallel(owlite_model))
    owl.benchmark()
```

Traceback:

```
OwLite [ERROR] Benchmarking failed during benchmark. Please try again, and if the problem persists, please report the issue at https://tally.so/r/mOl5Zk for further assistance
Traceback (most recent call last):
  File "/workspace/owlite-examples/object-detection/owlite_YOLOv5/YOLOv5/train.py", line 701, in <module>
    main(opt)
  File "/workspace/owlite-examples/object-detection/owlite_YOLOv5/YOLOv5/train.py", line 590, in main
    train(opt.hyp, opt, device, callbacks)
  File "/workspace/owlite-examples/object-detection/owlite_YOLOv5/YOLOv5/train.py", line 291, in train
    owl.benchmark()
  File "/usr/local/lib/python3.10/dist-packages/owlite/owlite.py", line 702, in benchmark
    self.target.orchestrate_benchmark(download_engine=download_engine)
  File "/usr/local/lib/python3.10/dist-packages/owlite/api/benchmarkable.py", line 193, in orchestrate_benchmark
    self.poll_benchmark(wait_for_the_results=download_engine)
  File "/usr/local/lib/python3.10/dist-packages/owlite/api/benchmarkable.py", line 382, in poll_benchmark
    raise RuntimeError("Benchmarking failed")
RuntimeError: Benchmarking failed
```

Hello Emily. Thank you for using OwLite and for reporting the issue you experienced.

It seems you encountered an unexpected error, and we apologize for the inconvenience. Thank you for providing detailed information about the environment in which the issue occurred.

I have just notified our development team about your request, and they will get back to you soon with a response.

Thank you!


Dear Emily,

Thank you for choosing OwLite and for bringing this issue to our attention.

You mentioned that you obtained a quantized ONNX model through OwLite. To troubleshoot further, we recommend building the engine directly with the `trtexec --int8` option in your local TensorRT Docker environment, using the quantized ONNX model downloaded from OwLite. For detailed instructions on building with `trtexec`, please refer to the following link.
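As a rough sketch of this check, the Python snippet below assembles the same `trtexec` flags used in this thread (`--onnx`, `--saveEngine`, `--int8`) and runs the build with `subprocess`, assuming `trtexec` is on `PATH` inside the TensorRT container. The helper names `build_trtexec_cmd` and `run_trtexec` are hypothetical; they are not part of OwLite or TensorRT.

```python
import shutil
import subprocess


def build_trtexec_cmd(onnx_path: str, engine_path: str, int8: bool = True,
                      trtexec: str = "trtexec") -> list[str]:
    """Assemble a trtexec invocation with the flags used in this thread (hypothetical helper)."""
    cmd = [trtexec, f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if int8:
        # Allow INT8 precision for the engine build.
        cmd.append("--int8")
    return cmd


def run_trtexec(cmd: list[str]) -> int:
    """Run trtexec, print the tail of its logs, and return the process exit code."""
    result = subprocess.run(cmd, capture_output=True, text=True)
    print(result.stdout[-2000:])
    print(result.stderr[-2000:])
    return result.returncode


if __name__ == "__main__":
    cmd = build_trtexec_cmd("yolo_yolov5m_yolov5m_ptq.onnx", "test.trt")
    print("Command:", " ".join(cmd))
    if shutil.which(cmd[0]) is None:
        print("trtexec not found on PATH; run this inside the TensorRT Docker container.")
    else:
        code = run_trtexec(cmd)
        print("Engine build", "succeeded" if code == 0 else f"failed (exit code {code})")
```

If `trtexec` is installed somewhere other than `PATH`, pass its full path through the `trtexec` argument. A non-zero exit code indicates a failed build, and the printed log tail should contain the parser or builder error to look for.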

We appreciate your patience and encourage you to reach out if you need further assistance.

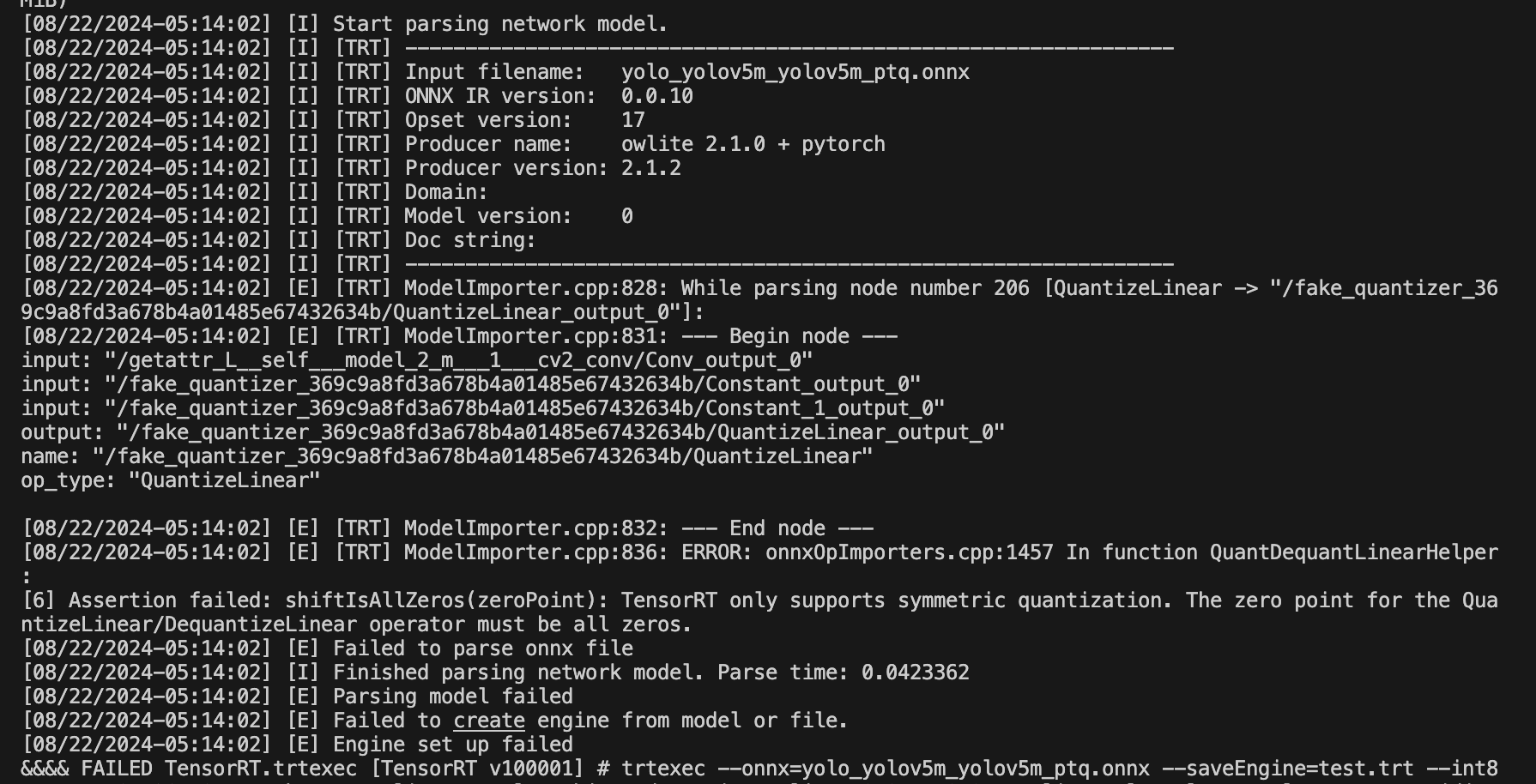

Following your advice, I ran `trtexec`, but I got the error shown above, and the engine file could not be created with `trtexec` either. The command I used was `trtexec --onnx=yolo_yolov5m_yolov5m_ptq.onnx --saveEngine=test.trt --int8`.


Dear Emily,

It appears that your ONNX model is currently not valid for TensorRT. The error message you encountered, “TensorRT only supports symmetric quantization,” indicates that some nodes in your model had the `symmetric` option set to `false` when you applied compression through OwLite. Since TensorRT only supports symmetric quantization, I recommend changing the compression option to `symmetric: true` and regenerating the PTQ-quantized model.
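To illustrate the difference, here is a minimal Python sketch of how an INT8 scale and zero point are typically derived from a calibrated range `[x_min, x_max]`. In symmetric quantization the zero point is fixed at 0; in asymmetric quantization (shown with the common uint8 convention) it generally is not, and a non-zero zero point is what the TensorRT error refers to. The function names and the example range are illustrative assumptions, not OwLite's implementation.

```python
def symmetric_params(x_min: float, x_max: float, num_bits: int = 8) -> tuple[float, int]:
    """Symmetric (signed) quantization: the zero point is always 0."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for int8
    amax = max(abs(x_min), abs(x_max))
    scale = amax / qmax if amax > 0 else 1.0
    return scale, 0


def asymmetric_params(x_min: float, x_max: float, num_bits: int = 8) -> tuple[float, int]:
    """Asymmetric (unsigned) quantization: the zero point shifts with the range."""
    qmin, qmax = 0, 2 ** num_bits - 1  # 0..255 for uint8
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)  # the range must contain 0
    scale = (x_max - x_min) / (qmax - qmin) if x_max > x_min else 1.0
    zero_point = round(qmin - x_min / scale)
    return scale, max(qmin, min(qmax, zero_point))


if __name__ == "__main__":
    lo, hi = -1.0, 3.0  # hypothetical calibrated activation range
    s_scale, s_zp = symmetric_params(lo, hi)
    a_scale, a_zp = asymmetric_params(lo, hi)
    print(f"symmetric:  scale={s_scale:.6f}, zero_point={s_zp}")   # zero_point=0
    print(f"asymmetric: scale={a_scale:.6f}, zero_point={a_zp}")  # zero_point=64
```

For this skewed range, symmetric quantization gives a coarser scale (about 0.0236 versus 0.0157) in exchange for a zero point of 0, which is the form TensorRT accepts.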

To assist with this, you can easily locate the problematic nodes by searching for 'symmetric: false' using Ctrl+F (or Command+F on Mac). This will help you identify and correct the relevant nodes.
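If the compression settings are available as text, the same Ctrl+F search can be scripted. The sketch below assumes a hypothetical YAML-like dump with one `symmetric: ...` entry per line (the actual OwLite format may differ). It uses a regular expression so that `symmetric:false` and `Symmetric: False` also match, while `asymmetric: false` does not.

```python
import re

# Matches "symmetric: false", "symmetric:false", "Symmetric: False", ...
# The leading \b keeps words such as "asymmetric" from matching.
_PATTERN = re.compile(r"\bsymmetric\s*:\s*false\b", re.IGNORECASE)


def find_asymmetric_settings(config_text: str) -> list[tuple[int, str]]:
    """Return (1-based line number, stripped line) for every 'symmetric: false' entry."""
    return [
        (lineno, line.strip())
        for lineno, line in enumerate(config_text.splitlines(), start=1)
        if _PATTERN.search(line)
    ]


if __name__ == "__main__":
    # Hypothetical dump of per-node compression settings.
    sample = (
        "conv1:\n"
        "  precision: int8\n"
        "  symmetric: true\n"
        "conv2:\n"
        "  precision: int8\n"
        "  symmetric: False\n"
    )
    for lineno, line in find_asymmetric_settings(sample):
        print(f"line {lineno}: {line}")  # line 6: symmetric: False
```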

Thank you for your patience while we investigated this issue.

Best regards,
Jiyun Park

Thank you for your advice. I changed the option from `symmetric: false` to `symmetric: true` as you suggested, after which I got a valid engine and the benchmark worked. I appreciate your kind and quick response.
